Supercomputers are a class of high-performance systems that combine many central processing units (CPUs) to achieve high computational rates. Historically, they have reached the fastest performance available at a given time by using vector arithmetic, which lets the system operate on pairs of lists of numbers instead of single pairs of numbers. For instance, suppose we want to calculate the body mass index (BMI = weight of the person in kilograms / square of the person's height in metres) of a group of a thousand people. General-purpose computing would use a loop to work through an array of floating-point numbers one element at a time, which adds overhead on the processor. Vector processing in supercomputers, by contrast, operates on many elements of the array in parallel. The pseudocode below shows how general-purpose computing and supercomputing each calculate the BMI of 1000 people and store it in another memory location.

General-purpose processing performs the task using the following pseudocode:

For i = 1 to 1000

Height = H(i)

Weight = W(i)

BMI = Weight / (Height * Height)

Store B(i) = BMI

End For

Supercomputing, by contrast, avoids the explicit loop and performs the operation in just a few steps using parallel computing, as follows:

Read all elements of height H

Read all elements of weight W

Perform W / (H * H) on all elements

Store the results in BMI array B

Commands of this kind, which operate on multiple data items using a single instruction, are called single instruction, multiple data (SIMD) instructions, widely known as vector instructions. They operate on data held in storage locations called vector registers. A vector register can hold several data items at once, which helps meet the high-speed computational requirements of various engineering and scientific fields.
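The contrast between the loop and the array-at-once formulation can be sketched in Python. This is only an illustration under stated assumptions: it uses NumPy, whose array expressions run in compiled code that may or may not use SIMD instructions depending on the build and the hardware, and it is not supercomputer code. The function names and the randomly generated weights and heights are made up for the example.

```python
import numpy as np

def bmi_loop(weights_kg, heights_m):
    """Scalar version: one division per loop iteration."""
    result = []
    for w, h in zip(weights_kg, heights_m):
        result.append(w / (h * h))
    return result

def bmi_vectorized(weights_kg, heights_m):
    """Array version: a single expression over the whole arrays."""
    w = np.asarray(weights_kg, dtype=np.float64)
    h = np.asarray(heights_m, dtype=np.float64)
    return w / (h * h)

# Illustrative data for 1000 people (made-up values, not measurements).
rng = np.random.default_rng(seed=0)
weights_kg = rng.uniform(50.0, 100.0, size=1000)
heights_m = rng.uniform(1.5, 2.0, size=1000)

bmi_a = bmi_loop(weights_kg, heights_m)        # element by element
bmi_b = bmi_vectorized(weights_kg, heights_m)  # whole array at once
```

Both functions return the same values; the difference lies in how the work is expressed, one element per step versus one operation over the entire array.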

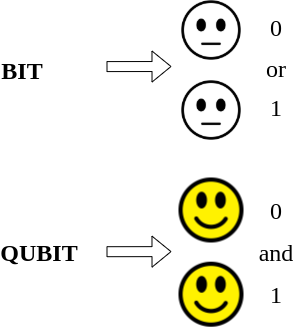

Until recently, we relied on such supercomputers, which use classical computing. In classical computing, data is handled as bits and bytes made of zeros and ones. A bit therefore acts like a switch with two states, either on or off, which limits the ability to probe multiple possibilities simultaneously. In quantum computing, quantum bits, or qubits, take the place of bits and bytes as the fundamental unit of information. The superposition principle allows a qubit to be in a combination of on and off at the same time, and this helps quantum computers achieve large speedups, exponential for certain problems, compared with classical computers. In this sense, superposition lets qubits explore multiple possibilities at once. For example, it can help to choose promising candidates for a particular application in material design by exploring the multiple underlying physical phenomena.

It is possible to link a series of qubits inextricably together so that they represent many things at the same time. So, if some exploration paths end in a dead end, we need not backtrack and start all over again. Moreover, in real-life situations that involve many data points, such as arranging people, selecting a menu or choosing clothes, supercomputers underperform once the number of combinations and permutations required becomes large. Quantum computers can overcome this shortcoming because they accommodate such huge problems in multidimensional spaces.

In quantum computing, algorithms that follow the principle of wave interference in quantum mechanics are used to extract solutions from the multidimensional spaces that represent the data. The solutions obtained in this manner can be translated back into a user-readable form.
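To give a feel for how quickly the number of arrangements grows, here is a minimal Python sketch that counts the ways of arranging n people in a row (n factorial). The function names and the assumed rate of one billion checks per second are hypothetical, chosen only for illustration. The sketch shows the size of the search space; it does not imply that a quantum computer solves such problems by trying every arrangement.

```python
import math

def seating_arrangements(n_people):
    """Number of distinct orderings of n_people in a row (n factorial)."""
    return math.factorial(n_people)

def seconds_to_enumerate(n_people, checks_per_second=1e9):
    """Time to visit every ordering at an assumed, hypothetical checking rate."""
    return seating_arrangements(n_people) / checks_per_second

for n in (5, 10, 20):
    print(n, seating_arrangements(n), seconds_to_enumerate(n))
```

At the assumed rate, enumerating the orderings of 20 people would already take on the order of decades, which is why brute-force search stops being practical for such problems.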

Science of quantum computers

In quantum computers, the interplay of quantum mechanical phenomena such as superposition, interference and entanglement is applied to quantum bits to perform data processing. A qubit is a quantum mechanical system with two distinct states that can exist simultaneously until a measurement is performed on it. The superposition principle establishes that, even though we cannot perceive the state of a quantum mechanical system at a given instant, more than one state can exist simultaneously. It allows a single qubit to represent both zero and one at once, and a pair of qubits to be in a superposition of the four states shown below:

| 00 | 01 |

| 10 | 11 |

Because this type of representation supports the coexistence of multiple states, it enables quantum computers to handle a large number of calculations at the same time.
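The four states of a pair of qubits can be made concrete with a small state-vector simulation in plain Python. This is a sketch of the underlying mathematics, not of how real quantum hardware is programmed: the state is a list of four amplitudes for 00, 01, 10 and 11, and the Hadamard gate `H` is the standard gate that turns a definite 0 into an equal superposition of 0 and 1. The helper names are made up for the example.

```python
import math

# Standard Hadamard gate as a 2x2 matrix.
S = 1 / math.sqrt(2)
H = [[S, S],
     [S, -S]]

def apply_single_qubit_gate(state, gate, target, n_qubits):
    """Apply a 2x2 gate to qubit `target` (0 = leftmost bit) of a state vector."""
    new_state = [0.0] * len(state)
    shift = n_qubits - 1 - target
    for index, amplitude in enumerate(state):
        bit = (index >> shift) & 1
        for new_bit in (0, 1):
            # Same basis state, but with the target bit set to new_bit.
            new_index = (index & ~(1 << shift)) | (new_bit << shift)
            new_state[new_index] += gate[new_bit][bit] * amplitude
    return new_state

def probabilities(state):
    """Probability of each measurement outcome: squared magnitude of its amplitude."""
    return [abs(a) ** 2 for a in state]

state = [1.0, 0.0, 0.0, 0.0]                      # both qubits start in 0
state = apply_single_qubit_gate(state, H, 0, 2)   # superpose the first qubit
state = apply_single_qubit_gate(state, H, 1, 2)   # superpose the second qubit
probs = probabilities(state)

for index, p in enumerate(probs):
    print(format(index, "02b"), round(p, 3))
```

After both gates, each of the four outcomes 00, 01, 10 and 11 has probability 0.25, so the pair of qubits carries all four states at once until it is measured.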

Though quantum entanglement is often presented as a complex quantum mechanical phenomenon with many implications, you can understand it as a way of describing different quantum states with reference to each other: it establishes relationships among these states even when they are spatially separated. Once two qubits are entangled, the state of one cannot be described independently of the other. As a loose real-life analogy, entanglement resembles the bonds among people that persist even when they are physically separated. When such a relationship holds between quantum mechanical systems, it is termed quantum entanglement, though its wider and deeper implications are beyond the scope of this article.
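A standard textbook illustration of entanglement is the Bell state, prepared by applying a Hadamard gate to the first qubit and then a controlled-NOT (CNOT) gate to the pair. The plain-Python sketch below simulates this on a four-amplitude state vector and samples measurements; the function names and the fixed random seed are assumptions made for the example, and the code models only the mathematics, not a physical device.

```python
import math
import random

def hadamard_on_first(state):
    """Hadamard gate on the first qubit of a two-qubit state [a00, a01, a10, a11]."""
    a00, a01, a10, a11 = state
    s = 1 / math.sqrt(2)
    return [s * (a00 + a10), s * (a01 + a11), s * (a00 - a10), s * (a01 - a11)]

def cnot(state):
    """CNOT with the first qubit as control: flips the second qubit when the first is 1."""
    a00, a01, a10, a11 = state
    return [a00, a01, a11, a10]

def measure(state, rng):
    """Sample one measurement outcome as a two-character bit string."""
    weights = [abs(a) ** 2 for a in state]
    outcome = rng.choices(range(4), weights=weights)[0]
    return format(outcome, "02b")

bell = cnot(hadamard_on_first([1.0, 0.0, 0.0, 0.0]))  # start from 00

rng = random.Random(0)  # fixed seed so the run is repeatable
samples = [measure(bell, rng) for _ in range(1000)]
print(sorted(set(samples)))
```

Each individual result is random, yet only 00 and 11 ever appear: the two qubits always agree, which is the kind of correlation between separated states that the paragraph above describes.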

The core of one widely used type of quantum computer is the superconducting qubit. Superconducting technology is one of the proven paths to efficient quantum computing: superconductors are used to achieve quantum tunneling of paired electrons across a Josephson junction, from which the qubits are constructed. The superconductors are cooled to temperatures close to absolute zero, the lower limit set by the laws of thermodynamics, using superfluids. Control functions such as read, hold or change are realized by exposing the superconducting qubit to microwave photons. The computational space is enlarged by the superposition of qubits, and programmable gates help to accommodate complex problems in this vast computational space. The inherently random behavior of individual qubits becomes correlated through quantum entanglement, and quantum algorithms are specifically designed to exploit it to solve particular problems. Designing the best algorithm for a specific task is the toughest part of quantum computing, alongside the decoherence of hardware platforms and the control of errors in large-scale calculations. A breakthrough that overcomes these limitations is essential for expanding the horizons of science and technology.
